What we do

The ReberLab (PI: Paul J. Reber) is a cognitive neuroscience laboratory at Northwestern University. Our research focuses on the neuroscience of cognitive expertise and the role of the memory systems that support its development.

OUR RESEARCH

SISL: perceptual-motor skill learning

Using the Serial Interception Sequence Learning (SISL) task, we aim to understand:

(A) Implicit and explicit memory contributions to perceptual-motor skill learning.

(B) Operating characteristics of implicit learning, such as inflexibility, transfer, and sensory modality differences.

Cognitive Expertise through Repetition Enhanced Simulation-based training (CERES)

We are interested in improving intuitive decision making via implicit learning, accelerating training through novel protocols inspired by the structure of memory systems.

PINNACLE 2.0

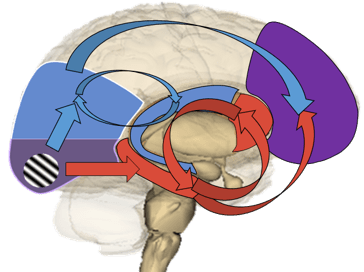

We are interested in modeling memory system interactions in intuitive decision making using our PINNACLE computational model.

Reward, cognitive control, and memory

We aim to understand how reward impacts memory.